There is a conversation happening right now across every tech podcast, every AI newsletter, every YouTube channel with an opinion and a ring light. The conversation goes like this: prompts are dying. Natural language will replace them. Soon you will just talk to AI the way you talk to a friend, and it will know what you want without being told.

This is partially true. It is also fundementally wrong. And the distance between those two things is where the entire future of human-machine collaboration lives.

The people making this argument are, almost without exception, people who use AI to chat. They ask it to write emails. Summarize articles. Generate images of cats in Renaissance paintings. For them, the prompt is a text box. And they are correct that the text box is becoming less important. Voice will replace typing. Context will replace explanation. The AI will learn your preferences and anticipate your needs before you articulate them.

But there is another class of people. A smaller, quieter class. People who are not chatting with AI. They are building with it. They are constructing systems where AI operates autonomously, makes decisions, handles customers, manages workflows, and runs businesses. For these people, the prompt is not a text box. It is an operating system. And operating systems do not disapear when the interface improves. They become more important.

The question is not whether prompts will die. The question is what prompts will become. And the answer, traced forward across the same exponential curves that govern everything else in this field, is something no one in the current conversation is describing accurately.

The Baseline: What a Prompt Actually Is

To understand where prompts are going, you have to be honest about what they are today.

In February 2026, most people think of a prompt as a sentence you type into ChatGPT. "Write me a cover letter." "Explain quantum physics to a five-year-old." "Make this email sound more professional." This is the surface layer. It is real. It is also the shallowest possible understanding of what is happening.

Underneath that surface, a different kind of prompting exists. It looks nothing like a chat message.

I built an autonomous operating system for service businesses. One of the clients of that system is a fitness coaching company that manages ~500 clients autonomously. An AI handles their nutrition plans, their workout routines, their scheduling, their billing questions, their complaints, their recipe requests, their supplement questions. It does this across chat, email, and video call follow-ups. Twenty-four hours a day, seven days a week.

The document that controls this system is ~100,000 characters long. It contains ~200 rules, called rails, each one born from a specific failure with a specific client. When the AI told a client the wrong portion size, that became a rail. When it promised to fix something and didn't, that became a rail. When it apologized for a billing error that was not our fault, that became a rail. When it confused cooked rice with raw rice and told a client his meal was wrong when it was correct, that became a rail.

That document is a prompt. It is also the most valuable intellectual property in the business. Not the code. Not the database. Not the interface. The prompt. Because without it, the AI is a brilliant employee who has never been trained, doesn't know the company policy, and will confidently do the wrong thing with perfect grammar.

A hundred thousand characters of instructions, constraints, rules, edge cases, and accumulated operational knowledge. That is what a prompt looks like when you are not chatting. That is what a prompt looks like when you are building.

And that is the baseline from which we project forward.
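
To make the shape of such a document concrete, here is a minimal sketch in Python. It is not the actual system. The `Rail` class, the `render_prompt` function, the base instruction, and the exact wording of each rule are assumptions made for illustration; only the failures behind them come from the examples above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rail:
    """One rule in the operational prompt, tied to the failure that produced it."""
    rail_id: int
    rule: str    # the instruction the AI must follow
    origin: str  # the real-world failure behind the rule


def render_prompt(base_instructions: str, rails: list[Rail]) -> str:
    """Assemble the base instructions and every rail into one prompt string."""
    lines = [base_instructions.strip(), "", "RAILS:"]
    for rail in rails:
        lines.append(f"{rail.rail_id}. {rail.rule}")
    return "\n".join(lines)


# Hypothetical wording; the real rails are not reproduced in this essay.
rails = [
    Rail(1, "State portion sizes exactly as written in the client's plan.",
         "AI told a client the wrong portion size"),
    Rail(2, "Never apologize for billing errors that are not our fault.",
         "AI apologized for an error we did not cause"),
    Rail(3, "Never confuse cooked rice with raw rice.",
         "AI told a client a correct meal was wrong"),
]

prompt = render_prompt("You are the assistant for a fitness coaching company.", rails)
print(prompt)
```

Keeping the origin next to each rule is a design choice worth noting: the failure is what justifies the rail, so it should travel with it even if it never reaches the model.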

The History: Instructions All the Way Down

The relationship between humans and machines has always been mediated by instructions. The form changes. The substance does not.

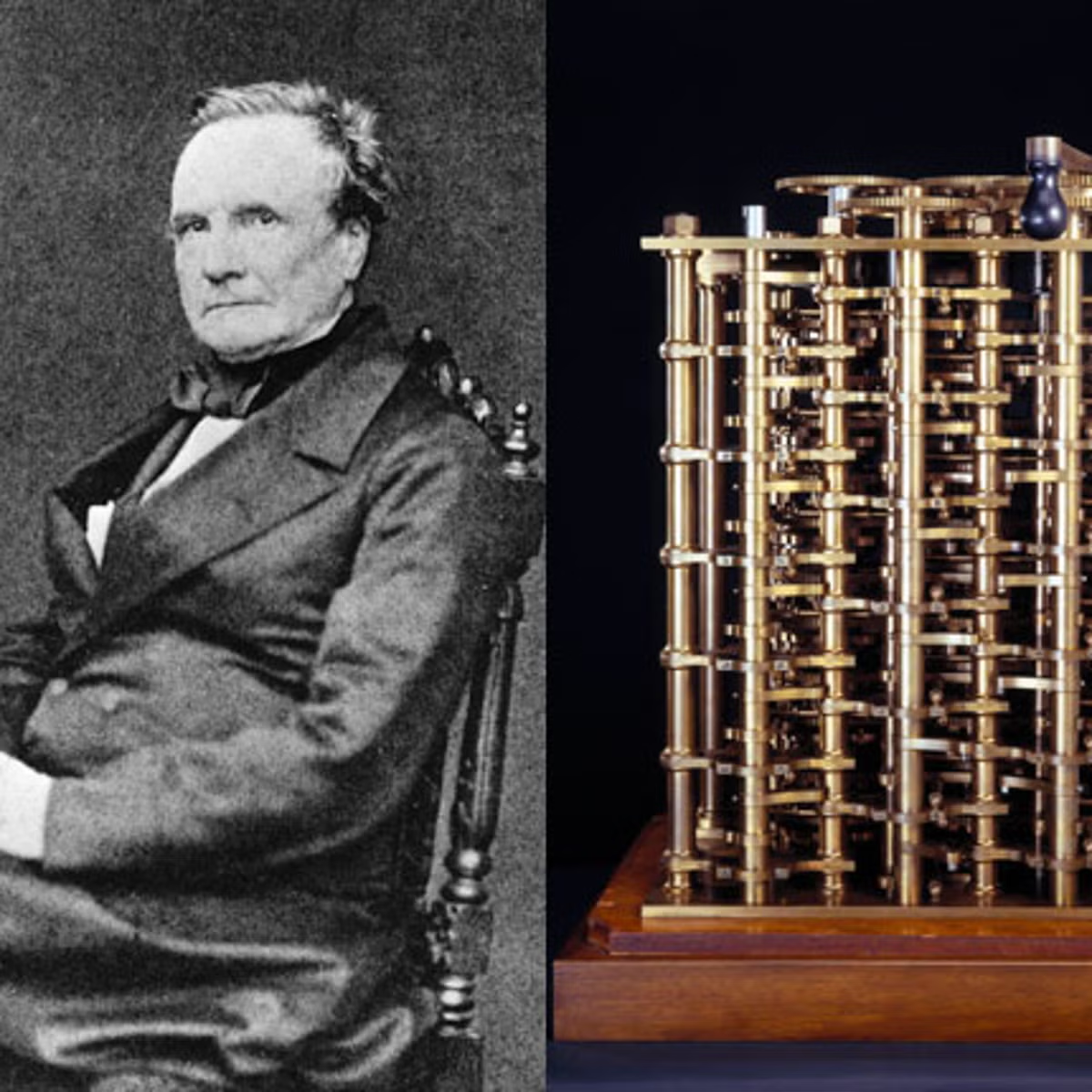

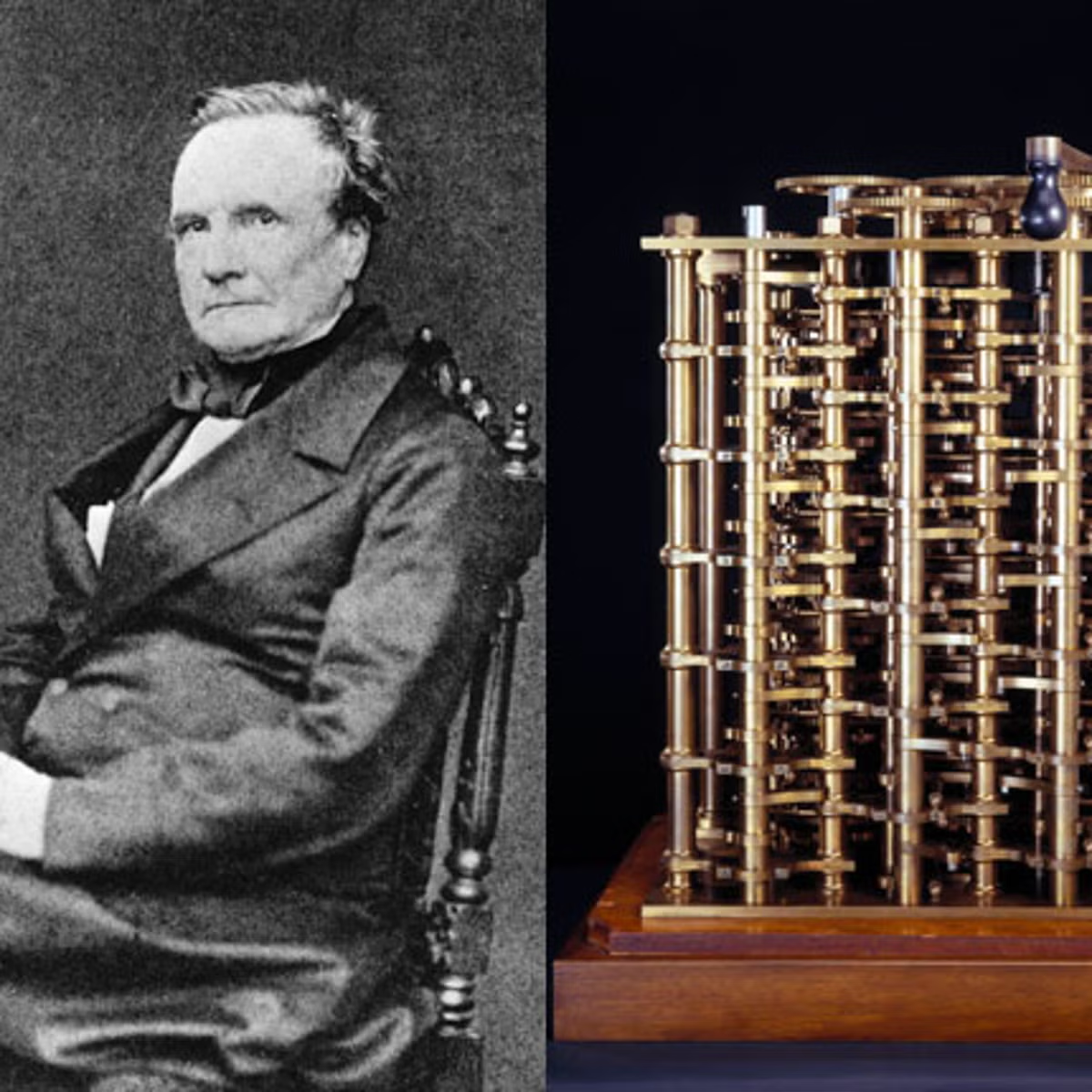

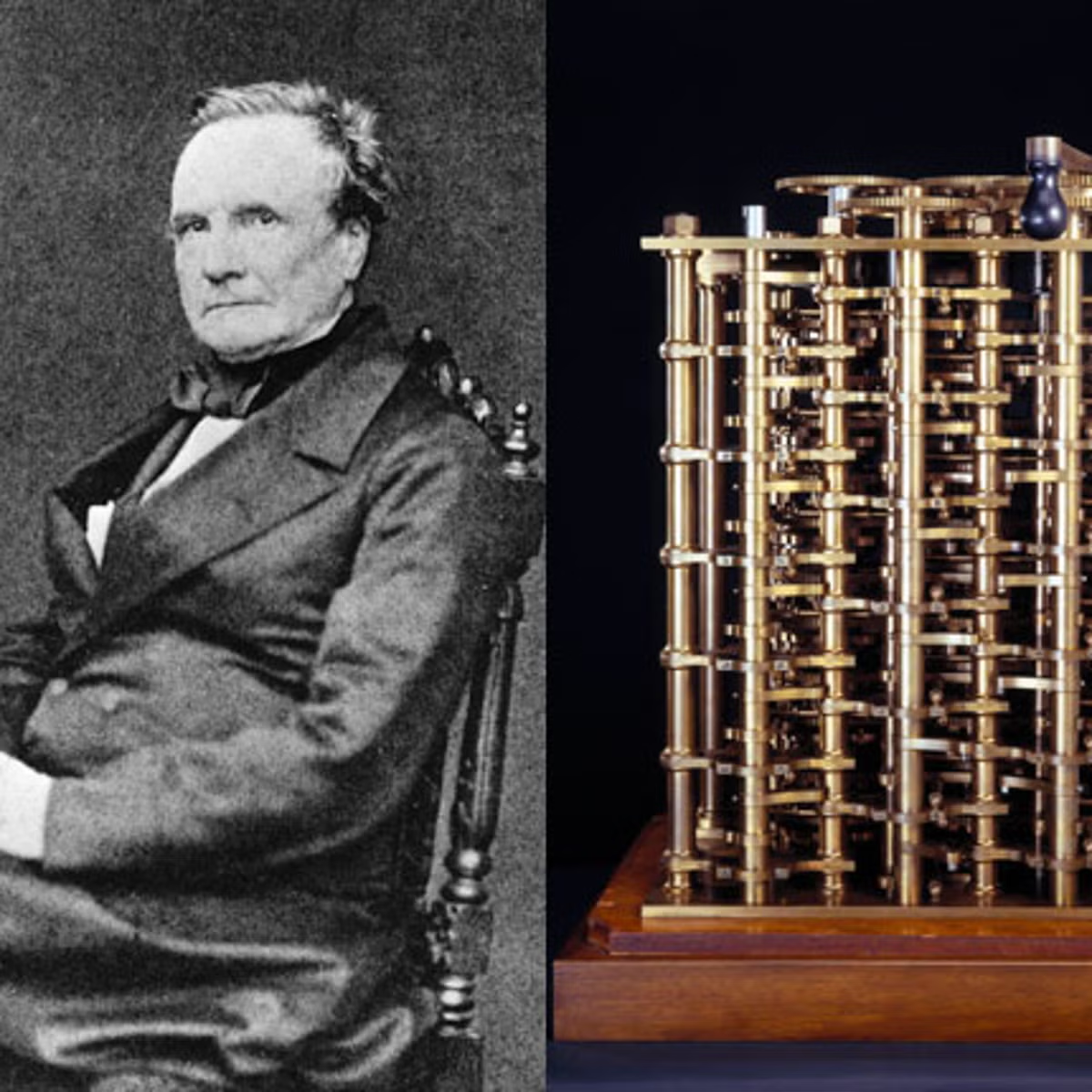

In 1843, Ada Lovelace wrote what is generally recognized as the first computer program: a sequence of operations for Charles Babbage's Analytical Engine to compute Bernoulli numbers. The machine was never built. The program was never executed. But the concept was established: a human must tell a machine, in precise and structured language, exactly what to do.

In the 1950s, programmers communicated with computers through punch cards. Each card was a physical instruction, a hole in a specific position telling the machine to perform a specific operation. The language was binary. The precision required was absolute. A misplaced hole meant a failed program.

By the 1970s, programming languages like C had abstracted raw machine instructions into human-readable syntax. You no longer needed to speak binary. But you still needed to speak C, which is to say, you still needed to translate your intent into a language the machine could parse. The abstraction rose. The requirement for precise human instruction did not change.

By the 2000s, Python and JavaScript made programming accessible to millions more people. The syntax became simpler. The barrier to entry dropped. But the core reality remained: if you wanted a machine to do something specific, you had to tell it, in structured language, exactly what that specific thing was.

Now, in 2026, we have reached a new abstraction layer. You can speak to a machine in English. In Spanish. In any natural language. The machine understands. The punch cards are gone. The syntax is gone. The barrier is the lowest it has ever been.

And people look at this and say: "The instructions are gone."

They are not gone. They are invisible. Which is a completley different thing.

Every time the abstraction layer rises, the instructions become less visible but more powerful. A Python script is less visible than a punch card but controls more. A natural language prompt is less visible than a Python script but directs more. The trajectory is consistent across 180 years of computing: the interface simplifies, the underlying instruction set grows in sophistication and scope.

The YouTubers are watching the interface. They should be watching the instruction set.

The Two Layers

Here is the distinction that the current conversation misses entirely.

There are two kinds of prompts, and they are evolving in opposite directions.

Layer 1: The conversational prompt. "Write me an email." "Summarize this document." "What should I have for dinner?" This layer is indeed dying in its current form. Within five years, you will not type these requests. You will speak them, gesture them, or simply have them anticipated by an AI that knows your patterns. The text box goes away. The friction goes away. The prompt, in the sense of a deliberate human instruction typed into an interface, goes away.

The YouTubers are right about Layer 1. Completely right.

Layer 2: The operational prompt. The ~100,000-character document. The system instructions. The rails. The rules that tell an AI how to behave when a client wants to cancel, what to say when someone asks for a refund, how to handle a billing dispute, when to escalate to a human, what tone to use, what promises it can make, what promises it absolutely cannot make. This layer is not dying. It is exploding.

Layer 2 is where the actual value lives. And Layer 2, traced forward along the same exponential curve that governs everything else in AI, does not simplify. It compounds.

Every error an AI system makes in production creates a new rule. Every edge case creates a new constraint. Every client interaction that goes wrong creates a new rail. The operational prompt does not shrink with time. It grows. It grows because the real world is infinitely complex, and an autonomous AI operating in the real world encounters that complexity continuously.

The system had 3 rails when it launched. Within months, it had ~200. That is not a temporary growth phase. That is the nature of the thing. The prompt is not a document you write once. It is a living system that absorbs the lessons of every failure and encodes them as instructions that prevent the next failure.

This is what I call the Rail Principle: every error becomes a rule. Every rule becomes a rail. Every rail reduces the probability of the next error. The prompt is not a starting point. It is the accumulated intelligence of every mistake the system has ever made, compressed into instructions that make the system smarter.
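
The loop is simple enough to sketch in a few lines of Python. This is an illustration under assumptions, not the production code: `Failure`, `RailBook`, and `absorb` are hypothetical names, and checking for duplicates by exact text match is a simplification.

```python
from dataclasses import dataclass, field


@dataclass
class Failure:
    """A production error, as a human or a monitor would report it."""
    what_happened: str
    correction: str  # the rule that would have prevented it


@dataclass
class RailBook:
    """The growing list of rails. Hypothetical structure for illustration."""
    rails: list[str] = field(default_factory=list)

    def absorb(self, failure: Failure) -> bool:
        """Turn a failure into a rail. Returns True if a new rail was added."""
        rule = failure.correction.strip()
        if rule in self.rails:
            return False  # this lesson is already encoded
        self.rails.append(rule)
        return True


book = RailBook()
book.absorb(Failure("Promised a fix and did not deliver",
                    "Never promise a fix without a confirmed follow-up."))
book.absorb(Failure("Apologized for a billing error that was not ours",
                    "Never apologize for billing errors that are not our fault."))
# A repeat of an already-encoded lesson does not create a second rail.
added = book.absorb(Failure("Apologized again for someone else's billing error",
                            "Never apologize for billing errors that are not our fault."))
print(len(book.rails), added)
```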

And soon, it will not be the human building the rails. It will be the AI itself. Not on its own terms, but on the principles its creator defined: what matters, what does not, what is acceptable, what is not. The AI will learn these principles over the course of its life alongside its human, absorbing them through thousands of interactions until they become instinct. And eventually, the human steps out of the picture entirely. Not because the human is no longer needed, but because there is nothing left that the AI cannot anticipate about its creator. The rails keep being built. The builder is no longer in the room. But the builder's fingerprints are on every rail.

The people saying prompts are dying are looking at Layer 1 and extrapolating to Layer 2. That is like watching self-driving cars eliminate the need for turn signals and concluding that steering wheels are obsolete.

The steering wheel is not going away. It is changing hands. The human will not be the one driving, but the vehicle will still need direction. The AI becomes the driver, and the prompt becomes the road the driver was trained to follow.

The Exponential: Two Years

2028: The prompt becomes the product

By 2028, the conversational prompt is effectively gone. AI assistants are ambient. They live in your earbuds, your glasses, your car, your home. They respond to voice, gesture, gaze, and context. Asking an AI to do something feels no different from asking a colleague. The interface friction that defined the 2022-2026 era has evaporated entirely.

And simultaneously, the operational prompt has become the single most valuable asset in any AI-powered business.

The market has figured out something that in 2026 only a handful of builders understood: the AI model is a commodity. Everyone has access to the same models. GPT, Claude, Gemini, Kimi, and the open-source alternatives are all available, all capable, all roughly equivalent for most tasks. The differentiator is not the model. The differentiator is the instructions.

Two businesses using the same AI model to handle customer service will produce radically different outcomes based entirely on the quality, depth, and precision of their operational prompts. Picture one with 30 rails built from 30 real failures and another with a generic system prompt copied from a blog post. In this scenario, the first business retains 94% of its customers. The second loses 40% to AI-generated mistakes that a single rail would have prevented.

By 2028, operational prompts are treated the way trade secrets are treated: proprietary, protected, and neccesary. Companies do not share their system prompts any more than Coca-Cola shares its formula. Not because the syntax is secret, but because the accumulated operational knowledge encoded in those prompts represents years of real-world learning that cannot be replicated without years of real-world failure.

The prompt is the product. The model is the electricity.

Venture capital has noticed. By 2028, the question investors ask is not "what model do you use?" It is "how many rails do you have?" Because rails are a proxy for operational maturity, for real-world deployment, for the kind of battle-tested knowledge that only comes from running an AI system in production and fixing what breaks.

The conversation about prompts dying sounds, by 2028, the way conversations about websites being unnecessary sounded in 2005. Technically coherent from a narrow angle. Catastrophically wrong from every other one.

The Exponential: Four Years

2030: The prompt learns to write itself

The most significant development in the prompt ecosystem between 2026 and 2030 is not that prompts get longer or more complex. It is that they begin to self-modify.

In 2026, when my system makes an error, I write a rail. A human identifies the failure, diagnoses the root cause, formulates the rule, and adds it to the document. The human is in the loop at every step.

By 2030, that loop has been fully automated. The AI systems monitoring production identify the failure. A seperate AI system diagnoses the root cause. A third system builds the new rail. No human reviews it. No human approves it. The AI already knows what its creator would want, because it spent years learning the principles behind every correction the creator ever made.

This is a profound shift. The prompt has gone from being something a human writes to something an AI generates from internalized principles. The human role has not moved from author to editor. The human has left the room entirely. Not because the human was pushed out, but because there is nothing left for the human to say that the AI has not already anticipated.
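
A rough sketch of that loop, in Python, shows how small the structural difference is between the two years. Every name here is an assumption: `process_transcript`, the stage functions, and the optional `review` step are hypothetical stand-ins, and in a real system each stage would be its own AI service rather than a few lines of string matching.

```python
from typing import Callable, Optional

# Each stage is a plain function in this sketch (hypothetical stand-ins).
Detector = Callable[[str], bool]
Diagnoser = Callable[[str], str]
RailWriter = Callable[[str], str]
Reviewer = Callable[[str], bool]


def process_transcript(
    transcript: str,
    detect: Detector,
    diagnose: Diagnoser,
    write_rail: RailWriter,
    review: Optional[Reviewer] = None,
) -> Optional[str]:
    """Run one transcript through the loop and return a new rail, if any."""
    if not detect(transcript):
        return None  # nothing went wrong
    cause = diagnose(transcript)
    rail = write_rail(cause)
    if review is not None and not review(rail):
        return None  # a reviewer, when present, can reject the rail
    return rail      # with no reviewer, the rail is accepted as written


# Toy stages so the sketch runs end to end.
def detect(transcript: str) -> bool:
    return "sorry for the billing error" in transcript.lower()


def diagnose(transcript: str) -> str:
    return "apologized for a billing error without checking fault"


def write_rail(cause: str) -> str:
    return f"Rail: do not repeat this failure ({cause})."


rail = process_transcript("AI: Sorry for the billing error!",
                          detect, diagnose, write_rail)
print(rail)
```

Passing a `review` function models the 2026 loop, where a human approves each rail. Leaving it out models the 2030 loop. The pipeline is the same; only the last gate disappears.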

The operational prompt of 2030 is not a document. It is a knowledge graph. A living, interconnected web of rules, constraints, edge cases, and learned behaviors that updates continuously based on real-world outcomes. It has thousands of rails, not because a human sat down and wrote thousands of rules, but because the system has been operating in the real world for four years and every significant failure has been automatically captured, analyzed, and encoded.
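
What "a knowledge graph of rails" might mean can be sketched in Python, with the caveat that this is speculation about a system that does not exist yet. The `RailGraph` class, the rail names, and the idea of linking related rails by undirected edges are all assumptions made for illustration.

```python
from collections import defaultdict, deque


class RailGraph:
    """Rails as nodes, with edges between rails that touch the same situation."""

    def __init__(self) -> None:
        self.rules: dict[str, str] = {}
        self.edges: dict[str, set[str]] = defaultdict(set)

    def add_rail(self, name: str, rule: str, related: tuple[str, ...] = ()) -> None:
        """Store a rail and link it, in both directions, to related rails."""
        self.rules[name] = rule
        for other in related:
            self.edges[name].add(other)
            self.edges[other].add(name)

    def connected(self, start: str) -> list[str]:
        """Breadth-first walk returning every rail reachable from start."""
        seen = {start}
        queue = deque([start])
        order = []
        while queue:
            node = queue.popleft()
            order.append(node)
            for nxt in sorted(self.edges[node]):
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
        return order


graph = RailGraph()
graph.add_rail("billing-apology",
               "Never apologize for billing errors that are not our fault.")
graph.add_rail("refund-promise",
               "Never promise a refund before checking policy.",
               related=("billing-apology",))
graph.add_rail("escalation",
               "Escalate billing disputes to a human.",
               related=("refund-promise",))
graph.add_rail("rice", "Never confuse cooked rice with raw rice.")

# A billing conversation pulls in the billing rails and leaves the rest out.
print(graph.connected("billing-apology"))
```

The point of the graph over a flat document is retrieval: a billing conversation only needs the rails connected to billing, not all of them at once.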

The casual user of 2030 has no idea this system exists. They talk to their AI and it just works. It knows what they want. It handles their requests correctly. It avoids mistakes. They think the AI is simply smart. They do not see the invisible architecture of four years of accumulated operational intelligence sitting behind every interaction.

And this is the irony the YouTubers of 2026 never anticipated: the prompts did not disappear. They became invisible. They became so deeply embedded in the operation of every AI system that no one sees them anymore. But they are there. They are everywhere. And they are the reason the AI works.

The Exponential: Nine Years

2035: The prompt merges with the model

By 2035, the distinction between "the prompt" and "the model" has become philosophically questionable.

In 2026, the model and the prompt were clearly separate things. The model was the base intelligence, trained on internet-scale data, capable of general reasoning. The prompt was the overlay, the set of specific instructions that directed that general intelligence toward a particular task. You could swap the model and keep the prompt. You could swap the prompt and keep the model. They were independent.

By 2035, this seperation has dissolved. The operational knowledge that in 2026 lived in an external document now lives inside the model itself. Not through traditional training, but through a process of continuous integration where the model internalizes the patterns encoded in the prompt over millions of interactions.

The model does not need to be told "never apologize for billing errors that are not our fault" because it has internalized, through years of reinforcement, that this behavior produces negative outcomes. It does not need a rail for it. The rail has become a reflex.

But here is the critical point: someone had to write that first rail. Someone had to identify the failure, diagnose the cause, and encode the correction. The fact that the rail eventually became automatic does not mean the rail was unneccesary. It means the rail worked so well that it became invisible.

This is the pattern of all successful instructions: they begin as explicit rules and end as implicit behavior. Traffic laws started as written rules. Now they are reflexes. You do not consciously think "red means stop." You just stop. The rule became invisible. But it is still there, encoded in your behavior, and it originated as an explicit instruction from another human.

The prompts of 2035 have completed this same journey. They began as external documents. They evolved into self-modifying knowledge graphs. They ended as internalized model behavior. At every stage, they were neccesary. At every stage, they were the accumulated wisdom of human intent, compressed into a form that machines could execute.

The people of 2035 do not call them prompts. They call them something else, maybe "operational DNA," maybe "behavioral architecture," maybe something we do not have a word for yet. But the function is identical: precise human intent, encoded in a form that directs artificial intelligence toward outcomes that humans actually want.

The Exponential: Twenty-Four Years

2050: The steering wheel becomes the destination

Twenty-four years out is where this trajectory produces its most counterintuitive result.

The AI systems of 2050 are, by every measurable standard, more intelligent than humans. They reason better. They create better. They solve problems humans cannot formulate, let alone answer. The capability gap between human and artificial intelligence in 2050 is wider than the gap between humans and chimpanzees today.

And yet.

The most valuable component of every AI system in 2050 is not a set of human-written instructions. Those are long gone. It is something deeper: the internalized principles of the human who first built the system. The AI does not follow rules anymore. It follows instincts, instincts that originated as rules, written by a human, decades ago. The human is nowhere in the process. But the human is everywhere in the result.

The form has changed beyond recognition. The ~100,000-character document of 2026 would look to a 2050 system the way a punch card looks to a 2026 programmer. Primitive. Charming. Historically significant. But the function, a human telling a machine what it is FOR, has not changed. Because that function is not a limitation of the technology. It is a feature of the relationship.

Intelligence without direction is not useful. It is not even meaningfully intelligent. A mind that can do anything but has no concept of what it should do is not powerful. It is lost. The prompt, in its most evolved form, is not a constraint on intelligence. It is the thing that makes intelligence purposeful.

Arthur C. Clarke understood this about human intelligence: that its purpose was not the intelligence itself, but what the intelligence was directed toward. The stepping stone was not the destination. But the direction of the stepping, the intent behind the movement, that was always human.

The prompt is the intent. And intent does not become obsolete when capability increases. It becomes more important. Because the more powerful the system, the more consequential the direction.

A car going five miles an hour does not need a steering wheel. You can course-correct with your feet. A car going five hundred miles an hour needs the most precise steering mechanism ever engineered. The faster you go, the more the steering matters.

AI is accelerating. The steering wheel is not going away. It is changing hands. And the hands that hold it next will have been trained by the ones that held it first.

Conclusion: The Steering Wheel

The people who say prompts are dying are watching someone parallel park and concluding that steering wheels are unnecessary because the car barely moves.

They have never driven at speed. They have never built a system that handles ~500 clients autonomously while they sleep. They have never watched an AI promise a client something impossible because a single missing instruction allowed it to. They have never spent a Tuesday afternoon writing a rule that says "never confuse cooked rice with raw rice" because an AI hallucinated a nutrition fact and confused a real person paying real money for a real service.

They chat with AI. They do not build with it. And the distance between those two activities is the distance between sitting in a parked car and driving on a highway.

The prompt is the steering wheel of artificial intelligence. As the vehicle gets faster, more powerful, more autonomous, the steering does not disappear. It changes hands. The human taught the AI how to drive. And now the AI drives.

The prompt is not the text box. The prompt is the human intent encoded in any form the machine can receive. And human intent, directed at machine capability, is not a phase of technology.

It is the point of technology.

Pedro Meza is the co-founder of Lyrox, an autonomous AI operating system for service businesses. He wrote this essay in February 2026 in conversation with Claude, which helped him articulate what he had already learned from building: that the instructions matter more than the intelligence they direct.

The Rail Principle, the operational framework referenced in this essay, was formalized on February 6, 2026. As of this writing, the system built on it contains ~200 rails.

A note on the misspellings.

If you noticed errors scattered through this essay, they are intentional. Eight words are misspelled, and they are left there for the same reason as in the previous essay. This is a document about human intent directing machine capability. A machine would not make these errors. A human does. The misspellings are not mistakes. They are proof of authorship. They are the fingerprint of the biological mind that first held the steering wheel.

Hay una conversación ocurriendo ahora mismo en cada podcast de tecnología, cada newsletter de inteligencia artificial, cada canal de YouTube con una opinión y un aro de luz. La conversación dice así: los prompts van a morir. El lenguaje natural los va a reemplazar. Pronto le vas a hablar al AI como le hablas a un amigo, y va a saber lo que quieres sin que se lo digas.

Esto es parcialmente cierto. También es fundamentalmente incorrecto. Y la distancia entre esas dos cosas es donde vive el futuro entero de la colaboración entre humanos y máquinas.

Las personas haciendo este argumento son, casi sin excepción, personas que usan AI para chatear. Le piden que escriba emails. Que resuma artículos. Que genere imágenes de gatos en pinturas del Renacimiento. Para ellos, el prompt es una caja de texto. Y tienen razón en que la caja de texto se está volviendo menos importante. La voz va a reemplazar el teclado. El contexto va a reemplazar la explicación. El AI va a aprender tus preferencias y anticipar tus necesidades antes de que las articules.

Pero hay otra clase de personas. Una clase más pequeña, más silenciosa. Personas que no están chateando con AI. Están construyendo con él. Están creando sistemas donde el AI opera de forma autónoma, toma decisiones, atiende clientes, maneja flujos de trabajo y administra negocios. Para estas personas, el prompt no es una caja de texto. Es un sistema operativo. Y los sistemas operativos no desaparecen cuando la interfaz mejora. Se vuelven más importantes.

La pregunta no es si los prompts van a morir. La pregunta es en qué se van a convertir. Y la respuesta, trazada hacia adelante a lo largo de las mismas curvas exponenciales que gobiernan todo lo demás en este campo, es algo que nadie en la conversación actual está describiendo con precisión.

La Línea Base: Qué Es Realmente un Prompt

Para entender hacia dónde van los prompts, hay que ser honesto sobre lo que son hoy.

En febrero de 2026, la mayoría de la gente piensa que un prompt es una oración que escribes en ChatGPT. "Escríbeme una carta de presentación." "Explícame la física cuántica como si tuviera cinco años." "Haz que este email suene más profesional." Esta es la capa superficial. Es real. También es la comprensión más superficial posible de lo que está ocurriendo.

Debajo de esa superficie, existe otro tipo de prompting. No se parece en nada a un mensaje de chat.

Yo construí un sistema operativo autónomo para negocios de servicios. Uno de los clientes de ese sistema es una empresa de coaching fitness que gestiona ~500 clientes de forma autónoma. Un AI maneja sus planes de nutrición, sus rutinas de ejercicio, sus agendas, sus preguntas de facturación, sus quejas, sus solicitudes de recetas, sus preguntas sobre suplementos. Lo hace a través de chat, email y seguimientos de videollamadas. Veinticuatro horas al día, siete días a la semana.

El documento que controla este sistema tiene ~100,000 caracteres. Contiene ~200 reglas, llamadas rieles, cada una nacida de un error específico con un cliente específico. Cuando el AI le dijo a un cliente el tamaño de porción incorrecto, eso se convirtió en un riel. Cuando prometió arreglar algo y no lo hizo, eso se convirtió en un riel. Cuando se disculpó por un error de facturación que no fue nuestra culpa, eso se convirtió en un riel. Cuando confundió arroz cocido con arroz crudo y le dijo a un cliente que su comida estaba mal cuando estaba correcta, eso se convirtió en un riel.

Ese documento es un prompt. También es la propiedad intelectual más valiosa del negocio. No el código. No la base de datos. No la interfaz. El prompt. Porque sin él, el AI es un empleado brillante que nunca ha sido entrenado, no conoce las políticas de la empresa, y va a hacer con confianza lo incorrecto con gramática perfecta.

Cien mil caracteres de instrucciones, restricciones, reglas, casos límite y conocimiento operacional acumulado. Eso es lo que parece un prompt cuando no estás chateando. Eso es lo que parece un prompt cuando estás construyendo.

Y esa es la línea base desde la cual proyectamos hacia adelante.

La Historia: Instrucciones Hasta el Fondo

La relación entre humanos y máquinas siempre ha sido mediada por instrucciones. La forma cambia. La sustancia no.

En 1843, Ada Lovelace escribió lo que generalmente se reconoce como el primer programa de computación: una secuencia de operaciones para que el Motor Analítico de Charles Babbage calculara los números de Bernoulli. La máquina nunca se construyó. El programa nunca se ejecutó. Pero el concepto quedó establecido: un humano debe decirle a una máquina, en lenguaje preciso y estructurado, exactamente qué hacer.

En los años 50, los programadores se comunicaban con las computadoras a través de tarjetas perforadas. Cada tarjeta era una instrucción física, un agujero en una posición específica diciéndole a la máquina que realizara una operación específica. El lenguaje era binario. La precisión requerida era absoluta. Un agujero mal colocado significaba un programa fallido.

Para los años 70, lenguajes de programación como C abstrajeron las tarjetas perforadas en sintaxis legible por humanos. Ya no necesitabas hablar binario. Pero aún necesitabas hablar C, es decir, aún necesitabas traducir tu intención a un lenguaje que la máquina pudiera interpretar. La abstracción subió. El requisito de instrucciones humanas precisas no cambió.

Para los 2000, Python y JavaScript hicieron la programación accesible a millones más de personas. La sintaxis se simplificó. La barrera de entrada bajó. Pero la realidad central permaneció: si querías que una máquina hiciera algo específico, tenías que decirle, en lenguaje estructurado, exactamente qué era eso específico.

Ahora, en 2026, hemos llegado a una nueva capa de abstracción. Puedes hablarle a una máquina en inglés. En español. En cualquier lenguaje natural. La máquina entiende. Las tarjetas perforadas se fueron. La sintaxis se fue. La barrera es la más baja que ha sido jamás.

Y la gente ve esto y dice: "Las instrucciones desaparecieron."

No desaparecieron. Se volvieron invisibles. Lo cual es algo completamente diferente.

Cada vez que la capa de abstracción sube, las instrucciones se vuelven menos visibles pero más poderosas. Un script de Python es menos visible que una tarjeta perforada pero controla más. Un prompt en lenguaje natural es menos visible que un script de Python pero dirige más. La trayectoria es consistente a lo largo de 180 años de computación: la interfaz se simplifica, el conjunto de instrucciones subyacente crece en sofisticación y alcance.

Los YouTubers están mirando la interfaz. Deberían estar mirando el conjunto de instrucciones.

Las Dos Capas

Aquí está la distinción que la conversación actual se pierde por completo.

Hay dos tipos de prompts, y están evolucionando en direcciones opuestas.

Capa 1: El prompt conversacional. "Escríbeme un email." "Resúmeme este documento." "¿Qué debería cenar?" Esta capa efectivamente está muriendo en su forma actual. En cinco años, no vas a escribir estas solicitudes. Las vas a hablar, gesticular, o simplemente van a ser anticipadas por un AI que conoce tus patrones. La caja de texto desaparece. La fricción desaparece. El prompt, en el sentido de una instrucción humana deliberada escrita en una interfaz, desaparece.

Los YouTubers tienen razón sobre la Capa 1. Completamente razón.

Capa 2: El prompt operacional. El documento de ~100,000 caracteres. Las instrucciones del sistema. Los rieles. Las reglas que le dicen al AI cómo comportarse cuando un cliente quiere cancelar, qué decir cuando alguien pide un reembolso, cómo manejar una disputa de facturación, cuándo escalar a un humano, qué tono usar, qué promesas puede hacer, qué promesas absolutamente no puede hacer. Esta capa no está muriendo. Está explotando.

La Capa 2 es donde vive el valor real. Y la Capa 2, trazada hacia adelante a lo largo de la misma curva exponencial que gobierna todo lo demás en AI, no se simplifica. Se compone.

Cada error que un sistema de AI comete en producción crea una nueva regla. Cada caso límite crea una nueva restricción. Cada interacción con un cliente que sale mal crea un nuevo riel. El prompt operacional no se encoge con el tiempo. Crece. Crece porque el mundo real es infinitamente complejo, y un AI autónomo operando en el mundo real encuentra esa complejidad continuamente.

El sistema tenía 3 rieles cuando se lanzó. En cuestión de meses, tenía ~200. Eso no es una fase temporal de crecimiento. Esa es la naturaleza de la cosa. El prompt no es un documento que escribes una vez. Es un sistema vivo que absorbe las lecciones de cada error y las codifica como instrucciones que previenen el siguiente error.

Esto es lo que yo llamo el Principio del Riel: cada error se convierte en una regla. Cada regla se convierte en un riel. Cada riel reduce la probabilidad del siguiente error. El prompt no es un punto de partida. Es la inteligencia acumulada de cada error que el sistema ha cometido, comprimida en instrucciones que hacen al sistema más inteligente.

Y pronto, no será el humano quien construya los rieles. Será el AI mismo. No bajo sus propios términos, sino bajo los principios que su creador definió: qué importa, qué no, qué es aceptable, qué no lo es. El AI aprenderá estos principios a lo largo de su vida junto a su humano, absorbiéndolos a través de miles de interacciones hasta que se conviertan en instinto. Y eventualmente, el humano sale de la imagen por completo. No porque ya no sea necesario, sino porque no habrá nada que el AI no pueda anticipar de su creador. Los rieles se siguen construyendo. El constructor ya no está en la sala. Pero las huellas del constructor están en cada riel.

Las personas diciendo que los prompts están muriendo están mirando la Capa 1 y extrapolando a la Capa 2. Eso es como ver que los autos autónomos eliminan la necesidad de las direccionales y concluir que los volantes son obsoletos.

El volante no se va a ir. Va a cambiar de manos. El humano no será quien conduzca, pero el vehículo seguirá necesitando dirección. El AI se convierte en el conductor, y el prompt se convierte en el camino que el conductor fue entrenado para seguir.

The Exponential: Two Years

2028: The Prompt Becomes the Product

By 2028, the conversational prompt has effectively disappeared. AI assistants are ambient. They live in your earbuds, your glasses, your car, your home. They respond to voice, gesture, gaze, and context. Asking an AI for something feels the same as asking a colleague. The interface friction that defined the 2022-2026 era has evaporated completely.

And at the same time, the operational prompt has become the most valuable asset of any AI-driven business.

The market has discovered something that in 2026 only a handful of builders understand: the AI model is a commodity. Everyone has access to the same models. GPT, Claude, Gemini, Kimi, open-source alternatives: all are available, all are capable, all are roughly equivalent for most tasks. The differentiator is not the model. The differentiator is the instructions.

Two businesses using the same AI model to handle customer service will produce radically different results based entirely on the quality, depth, and precision of their operational prompts. One has 30 rails built from 30 real mistakes. The other has a generic system prompt copied from a blog. The first business retains 94% of its customers. The second loses 40% to AI-generated errors that a single rail would have prevented.

By 2028, operational prompts are treated the way trade secrets are treated: proprietary, protected, and necessary. Companies do not share their system prompts, just as Coca-Cola does not share its formula. Not because the syntax is secret, but because the accumulated operational knowledge encoded in those prompts represents years of real-world learning that cannot be replicated without years of real-world mistakes.

The prompt is the product. The model is the electricity.

Venture capital has noticed. By 2028, the question investors ask is not "what model do you use?" It is "how many rails do you have?" Because rails are an indicator of operational maturity, of real-world deployment, of the kind of battle-tested knowledge that only comes from running an AI system in production and fixing what breaks.

By 2028, the conversation about prompts dying sounds the way conversations about websites being unnecessary sounded in 2005. Technically coherent from one narrow angle. Catastrophically wrong from every other.

The Exponential: Four Years

2030: The Prompt Learns to Write Itself

The most significant development in the prompt ecosystem between 2026 and 2030 is not that prompts get longer or more complex. It is that they begin to modify themselves.

In 2026, when my system makes a mistake, I write a rail. A human identifies the failure, diagnoses the root cause, formulates the rule, and adds it to the document. The human is in the loop at every step.

By 2030, that loop has been fully automated. AI systems monitoring production identify the failure. A separate AI system diagnoses the root cause. A third system builds the new rail. No human reviews it. No human approves it. The AI already knows what its creator would want, because it spent years learning the principles behind every correction the creator made.
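The three-stage loop described here (detect, diagnose, write a rail) can be sketched as a small pipeline. This is only an illustration under stated assumptions: the function `run_rail_loop`, the types `Failure` and `Diagnosis`, and the keyword-based stand-ins for the three systems are all hypothetical, and a real deployment would presumably replace each stand-in with a model-backed component.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Optional


@dataclass(frozen=True)
class Failure:
    transcript: str  # the production interaction that went wrong


@dataclass(frozen=True)
class Diagnosis:
    failure: Failure
    root_cause: str


def run_rail_loop(
    transcripts: Iterable[str],
    detect: Callable[[str], Optional[Failure]],
    diagnose: Callable[[Failure], Diagnosis],
    write_rail: Callable[[Diagnosis], str],
    rails: list[str],
) -> list[str]:
    """Detect, diagnose, and write a rail, with no human approval step."""
    for transcript in transcripts:
        failure = detect(transcript)
        if failure is None:
            continue  # nothing went wrong in this interaction
        rule = write_rail(diagnose(failure))
        if rule not in rails:  # do not encode the same lesson twice
            rails.append(rule)
    return rails


# Toy stand-ins for the three systems (assumptions, not real components).
def detect(transcript: str) -> Optional[Failure]:
    bad = "sorry for the billing error" in transcript.lower()
    return Failure(transcript) if bad else None


def diagnose(failure: Failure) -> Diagnosis:
    return Diagnosis(failure, "apologized for a billing error that was not ours")


def write_rail(diagnosis: Diagnosis) -> str:
    return "Never apologize for billing errors that are not our fault."


rails = run_rail_loop(
    [
        "Customer: my bank charged me twice. Agent: Sorry for the billing error!",
        "Customer: what are your hours? Agent: We open at nine.",
        "Agent: sorry for the billing error, we will fix it.",
    ],
    detect, diagnose, write_rail, rails=[],
)
print(rails)
```

Keeping each stage as a plain callable mirrors the separation in the text: three independent systems, each replaceable, none of them waiting on a human reviewer.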

This is a profound shift. The prompt has gone from something a human writes to something an AI generates from internalized principles. The human role has not simply moved from author to editor. The human has left the room entirely. Not because the human was pushed out, but because there is nothing left for the human to say that the AI has not already anticipated.

The operational prompt of 2030 is not a document. It is a knowledge graph. A living, interconnected network of rules, constraints, edge cases, and learned behaviors that updates continuously based on real-world results. It has thousands of rails, not because a human sat down and wrote thousands of rules, but because the system has been operating in the real world for four years and every significant failure has been automatically captured, analyzed, and encoded.

The casual user of 2030 has no idea this system exists. They talk to their AI and it simply works. It knows what they want. It handles their requests correctly. It avoids mistakes. They think the AI is simply smart. They do not see the invisible architecture of four years of accumulated operational intelligence sitting behind every interaction.

And this is the irony the YouTubers of 2026 never anticipated: prompts did not disappear. They became invisible. They embedded themselves so deeply in the operation of every AI system that no one sees them anymore. But they are there. They are everywhere. And they are the reason the AI works.

The Exponential: Nine Years

2035: The Prompt Merges with the Model

By 2035, the distinction between "the prompt" and "the model" has become philosophically questionable.

In 2026, the model and the prompt were clearly separate things. The model was the base intelligence, trained on internet-scale data, capable of general reasoning. The prompt was the layer on top, the set of specific instructions that directed that general intelligence toward a particular task. You could swap the model and keep the prompt. You could swap the prompt and keep the model. They were independent.

By 2035, that separation has dissolved. The operational knowledge that in 2026 lived in an external document now lives inside the model itself. Not through traditional training, but through a process of continuous integration in which the model internalizes the patterns encoded in the prompt over millions of interactions.

The model does not need to be told "never apologize for billing errors that are not our fault" because it has internalized, through years of reinforcement, that this behavior produces negative outcomes. It does not need a rail for that. The rail has become a reflex.

But here is the critical point: someone had to write that first rail. Someone had to identify the failure, diagnose the cause, and encode the correction. The fact that the rail eventually became automatic does not mean the rail was unnecessary. It means the rail worked so well that it became invisible.

This is the pattern of all successful instructions: they begin as explicit rules and end as implicit behavior. Traffic laws started as written rules. Now they are reflexes. You do not consciously think "red means stop." You just stop. The rule became invisible. But it is still there, encoded in your behavior, and it originated as an explicit instruction from another human.

The prompts of 2035 have completed the same journey. They started as external documents. They evolved into self-modifying knowledge graphs. They ended as internalized model behavior. At every stage, they were necessary. At every stage, they were the accumulated wisdom of human intention, compressed into a form that machines could execute.

The people of 2035 do not call them prompts. They call them something else, maybe "operational DNA," maybe "behavioral architecture," maybe something we do not have a word for yet. But the function is identical: precise human intention, encoded in a form that directs artificial intelligence toward the outcomes humans actually want.

The Exponential: Twenty-Four Years

2050: The Steering Wheel Becomes the Destination

Twenty-four years out is where this trajectory produces its most counterintuitive result.

The AI systems of 2050 are, by every measurable standard, more intelligent than humans. They reason better. They create better. They solve problems that humans cannot formulate, much less answer. The capability gap between human and artificial intelligence in 2050 is wider than the gap between humans and chimpanzees today.

And yet.

The most valuable component of every AI system in 2050 is not a set of instructions written by humans. Those disappeared long ago. It is something deeper: the internalized principles of the human who first built the system. The AI no longer follows rules. It follows instincts, and those instincts originated as rules, written by a human, decades earlier. The human is nowhere in the process. But the human is everywhere in the outcome.

The form has changed beyond recognition. The ~100,000-character document of 2026 would look to a 2050 system the way a punch card looks to a programmer in 2026. Primitive. Charming. Historically significant. But the function, a human telling a machine WHAT IT IS FOR, has not changed. Because that function is not a limitation of the technology. It is a feature of the relationship.

Intelligence without direction is not useful. It is not even meaningfully intelligent. A mind that can do anything but has no concept of what it should do is not powerful. It is lost. The prompt, in its most evolved form, is not a constraint on intelligence. It is what gives intelligence a purpose.

Clarke understood this about human intelligence: that its purpose was not intelligence itself, but where that intelligence was headed. The stepping stone was not the destination. But the direction of the step, the intention behind the movement, that was always human.

The prompt is the intention. And intention does not become obsolete when capability increases. It becomes more important. Because the more powerful the system, the more consequential the direction.

A car at eight kilometers per hour does not need a steering wheel. You can correct course with your feet. A car at eight hundred kilometers per hour needs the most precise steering mechanism ever designed. The faster you go, the more the direction matters.

AI is accelerating. The steering wheel is not going away. It is changing hands. And the hands that hold it next will have been trained by the ones that held it first.

Conclusion: The Steering Wheel

The people who say prompts are dying are watching someone parallel park and concluding that steering wheels are unnecessary because the car is barely moving.

They have never driven at speed. They have never built a system that serves ~500 customers autonomously while they sleep. They have never watched an AI promise a customer something impossible because a single missing instruction allowed it. They have never spent a Tuesday afternoon writing a rule that says "never confuse cooked rice with raw rice" because an AI hallucinated a nutrition fact and confused a real person paying real money for a real service.

They chat with AI. They do not build with it. And the distance between those two activities is the distance between sitting in a parked car and driving on a highway.

The prompt is the steering wheel of artificial intelligence. As the vehicle becomes faster, more powerful, more autonomous, the steering wheel does not disappear. It changes hands. The human taught the AI to drive. And now the AI drives.

The prompt is not the text box. The prompt is human intention encoded in whatever form the machine can receive. And human intention, directed at machine capability, is not a phase of the technology.

It is the point of the technology.

Pedro Meza is the co-founder of Lyrox, an autonomous AI operating system for service businesses. He wrote this essay in February 2026 in conversation with Claude, which helped him articulate what he had already learned by building: that the instructions matter more than the intelligence they direct.

The Rail Principle, the operational framework referenced in this essay, was formalized on February 6, 2026. At the time of this publication, it contains ~200 rails.

A note on the spelling errors.

If you noticed scattered errors in the English version of this essay, they are intentional. Eight words are misspelled, and they are there for the same reason as in the previous essay. This is a document about human intention directing machine capability. A machine would not make these mistakes. A human would. The errors are not carelessness. They are proof of authorship. They are the fingerprint of the biological mind that first held the wheel.